To the government, the online harms reduction regulator (OHRR) bill is a noble and essential endeavour to sanitise the internet. Issues such as terrorism, child suicide cults, snuff movies, and John Bercow fan sites are deeply troubling and certainly meet the “reasonable person” test of harmful. Mean words that are unkind, but not illegal, not so much.

Nicky Morgan, the former secretary of state, said that she wanted an internet that is “trusted by and protects everyone in the UK”, which is just a bit odd. It comes from a good place – politicians would like to be able to reassure parents who’ve seen their children implode after sustained cyberbullying, or relatives reasonably outraged that loathsome trolls can watch their loved ones for entertainment, that something can be done.

For example, they believe that providers of platforms for user-generated content like YouTube, Facebook, Twitter and others should have clear standards – and enforce those standards for their users or face fines and prosecutions from Ofcom.

Many of the expected standards are likely going to be imposed. The bill lists eight categories deemed harmful, including terrorism, dangers to kids, and hate crimes, but the last is “any other harms Ofcom deem appropriate”. This would grant powers of censorship to the regulator unseen since the state used to disapprove of books and plays.

The bill also covers “people who are not users of that service but may be affected by it”, which is all of planet Earth, even those who’ve never clicked a mouse. The potential for abuse of power is extreme, and this should be crystal clear to ministers.

Worry not, says the government in its “initial consultation response”, we get it, we respect your freedom of expression, we’re so down with that. All we want is to have a “risk based” and “proportionate” response to, you know, bad stuff.

And don’t worry about Ofcom, you won’t even see them, they’ll be like ghosts; so behind the scenes they won’t even deal with individual complaints. They’ll just drag a few TikTok officials into a room for a quiet word now and again. And besides, they have “a proven track record of experience, expertise and credibility”, so that’s cool. They’re so super smart that I’m only surprised they haven’t all applied to work in No 10 already.

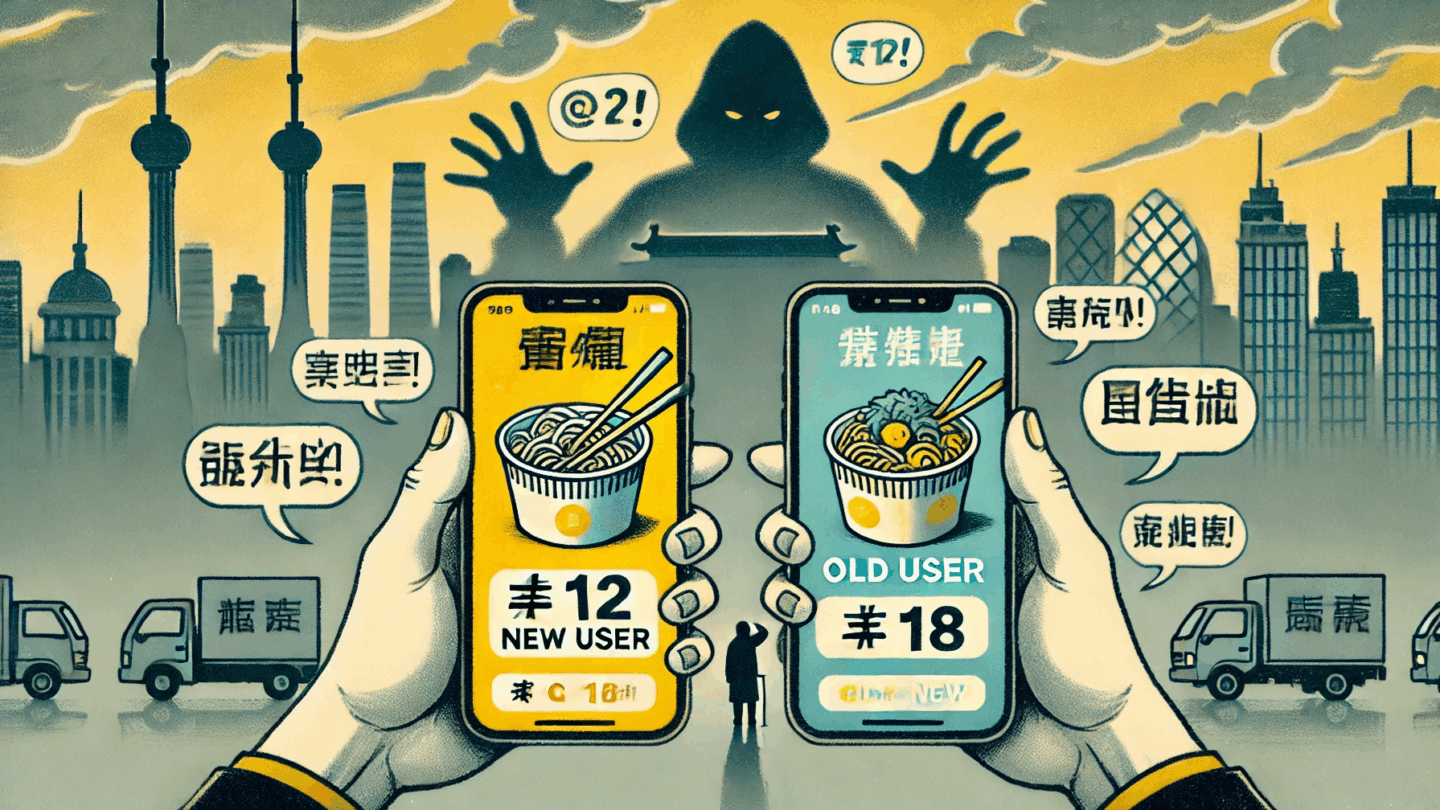

Like the regulation of elections, charities and information, the system is deliberately designed to create an ever-present sense of arbitrary threat such that internet companies will over-regulate themselves. This is the strong signal that grieving parents may want, but legislation based on assuaging grief is never a good idea.

The set-up is so broad it repeats a mistake made in the pre-internet 1986 Public Order Act, where an attempt to regulate “threatening, abusive or insulting words or behaviour, or disorderly behaviour” led, in 2006, to the police trying to fine a student for calling their horse “gay”. It led eventually to the word “insulting” being amended out of that law in 2013. This regulatory power indirectly brings it back, and in possibly a much worse way.

The government makes many mistakes. They assume that regulators are either uniquely wise or have a sense of proportion that sits independently of, and outside, the temporary swings in the public mood. In reality, they are ordinary people who respond to political pressure and make political decisions, sometimes in their own interest. This problem is a well-known matter of public choice economics. Failure to see it for what it is suggests a naivety about how the government works so extreme it would be flabbergasting – were it not for these recent examples of the issue in practice.

For example, since 2016, the Electoral Commission’s £0.5m pursuit of Darren Grimes for ticking the wrong box on a form amounted to little more than a fit of pique by conspiracy theorists mourning an election they could not reverse. The Charity Commission’s attempt to suppress pro-trade commentary on Brexit by Legatum and the IEA, while letting pro-alignment commentary from the IPPR go unmolested, reeks of political bias.

Vote Leave was eventually fined by the information commissioner after two years of trying to pin something on them, broadly for deleting data they were supposed to delete but which was then required as evidence to counter the politically motivated complaints.

This does not speak to the divine, impartial wisdom of regulators. They are human, and the government is about to give one of them the power to arbitrarily determine what words, sounds and images could be harmful to anyone, anywhere, on a worldwide basis, without recourse to parliament or the courts.

In doing this, the government appears to have lost all faith in the power of markets to self-regulate and market actors to respond to the concerns of their users and customers. It appears oblivious to the risk of regulatory creep – that, once enacted, Ofcom will just keep accumulating rules and powers. Or scope creep – that attempts to confine the costs of enforcement to large platforms will come up against the reality that the issues migrate to smaller platforms, which will then create the pressure to include them as well.

This combination will impact innovation and investment, raising costs until only the large incumbents can afford the regulatory burden. This is what happens time and again when governments over-regulate, with the best of intentions, from energy and cars to life-saving drugs.

The government has also ignored how human behaviour will contribute to the expansion of powers. Many people on social media regard disagreement with their opinions as hate speech, and there are many toxic debates filled with threats of harm. This proposed system of reactive fines is a recipe for platforms to be bombarded with vexatious complaints which they will have to take seriously, erring on the side of censorship, lest they miss the one reasonable case.

The government then appears to believe that wise regulators can precisely second guess what the public doesn’t want, balance it perfectly against other interests, such as freedom of speech or stimulating innovation and investment, and create a lemon-fresh internet, so shiny and clean that grateful masses will flock to it to spend their now state-approved bitcoins. Woe betide you if you prefer slightly grubby oranges and want to set up a service to share them in this stunning and brave new world.

Ofcom, your new hobby horse is gay. Please don’t shut down 1828 for letting me say so.